Introduction

Neuromorphic computing is an emerging field that aims to build computing and storage hardware that mimics the memory architecture and learning mechanisms of the human brain.

It differs from the neural networks used in many machine learning algorithms: neuromorphic computing represents the brain in hardware, whereas neural networks represent it in software.

In this article, we first look at current memory technologies and their drawbacks, and then at an alternative that addresses them.

The Von Neumann architecture

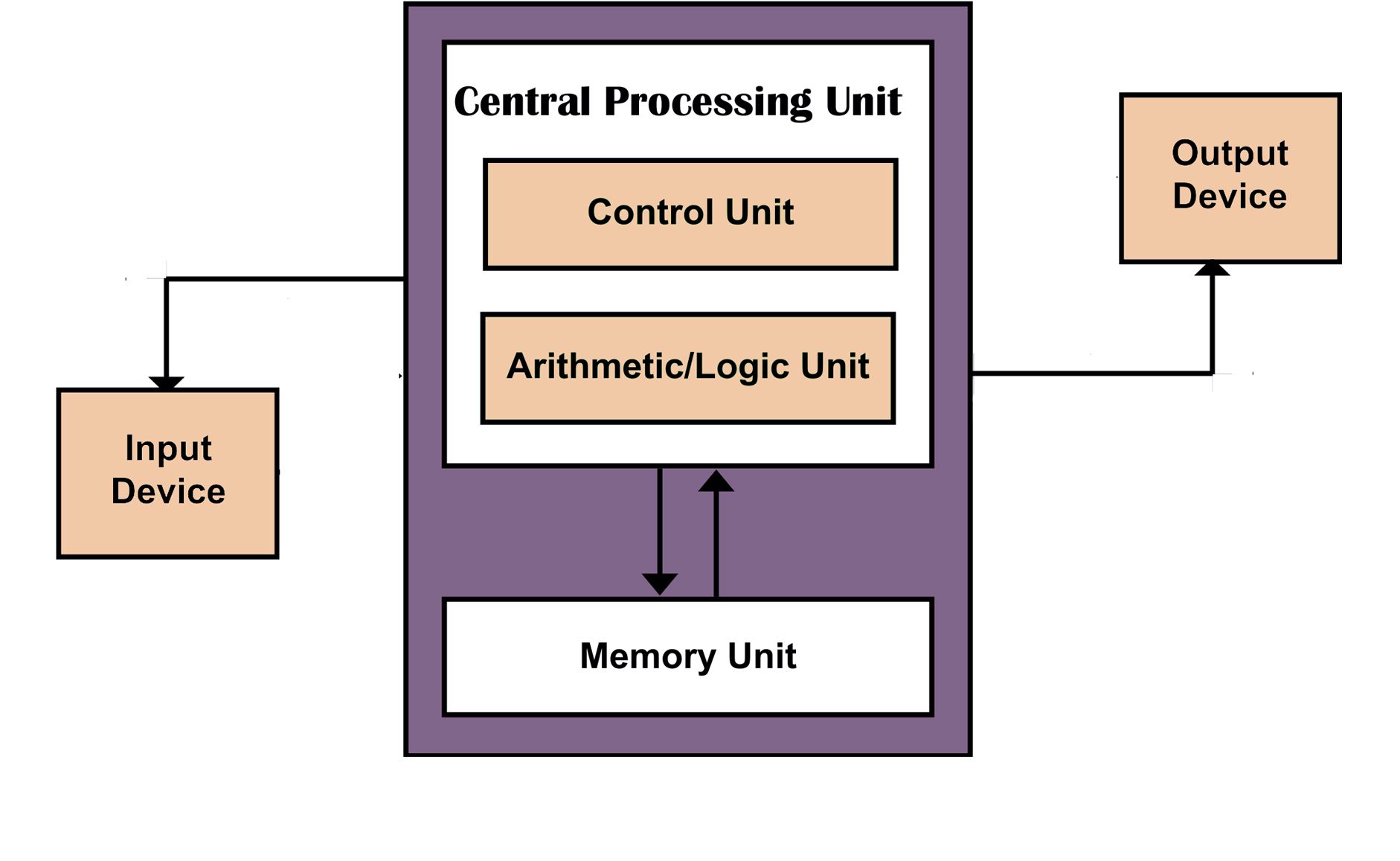

The computing architecture present in the devices used today is based on the Von Neumann architecture named after the polymath John Von Neumann.

The Von Neumann model of computation separates the memory (the unit that stores data) from the processing unit (the unit that performs computations on that data).

The devices we use today have two kinds of memory: RAM (Random Access Memory) and flash memory. RAM is volatile, i.e. the data stored in it is erased as soon as the power supply is switched off. It also performs read and write operations much faster than flash memory, which makes it suitable for computation. Flash memory, on the other hand, is non-volatile, i.e. it retains data even after the power supply is switched off, which makes it suitable for storage. The combination of RAM and flash in your device is what enables you to read this article.
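To make the volatile/non-volatile distinction concrete, here is a toy Python model. The `Memory` class and its methods are hypothetical: they only simulate what survives a power cycle and say nothing about speed or how real RAM or flash cells are built.

```python
from typing import Dict, Optional


class Memory:
    """Toy memory device; `volatile` decides whether data survives a power cycle."""

    def __init__(self, name: str, volatile: bool) -> None:
        self.name = name
        self.volatile = volatile
        self.cells: Dict[int, int] = {}

    def write(self, address: int, value: int) -> None:
        self.cells[address] = value

    def read(self, address: int) -> Optional[int]:
        return self.cells.get(address)

    def power_cycle(self) -> None:
        # Volatile memory loses its contents when power is removed;
        # non-volatile memory keeps them.
        if self.volatile:
            self.cells.clear()


ram = Memory("RAM", volatile=True)
flash = Memory("flash", volatile=False)
for device in (ram, flash):
    device.write(0x10, 42)
    device.power_cycle()
    print(device.name, device.read(0x10))
# RAM None
# flash 42
```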

The Von Neumann bottleneck

A limitation of this architecture is that the processing unit sits idle while it waits for data to be transferred to or from memory. This is called the Von Neumann bottleneck. It arises because the architecture separates the logic unit from the memory unit, so every piece of data has to travel between the two, and the speed of that transfer limits the whole system.
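A rough way to see the bottleneck is to compare how long the processor computes with how long it waits for memory. The sketch below assumes the simplest possible case (every operation waits for its data, with no caching or overlap) and uses made-up timings.

```python
def processor_utilization(compute_ns: float, memory_ns: float) -> float:
    """Fraction of time the processor does useful work when every operation
    must first wait for its operands to arrive from a separate memory unit
    (simplifying assumption: no caching, no overlap of fetch and compute)."""
    return compute_ns / (compute_ns + memory_ns)


# Hypothetical timings: 1 ns of arithmetic per operation,
# but 10 ns to fetch the operands from memory.
u = processor_utilization(compute_ns=1.0, memory_ns=10.0)
print(f"utilization: {u:.1%}")  # utilization: 9.1%
```

Under these assumptions the processor spends over 90% of its time waiting, which is why the separation between logic and memory matters so much.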

The brain does not work this way: its neurons and synapses not only process information but also store it.
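One approach researchers explore to mimic this in hardware, not described further in this article, is to compute inside the memory itself. In a crossbar of resistive memory cells (such as the RRAM devices discussed below), each cell's conductance stores a number, and applying voltages to the rows produces column currents equal to a matrix-vector product. The sketch assumes ideal devices and wires (no wire resistance or sneak currents), and the conductance and voltage values are hypothetical.

```python
# Conductances in siemens of a hypothetical 3x2 crossbar:
# G[row][col] is the cell where input row `row` crosses output column `col`.
G = [
    [1e-6, 2e-6],
    [3e-6, 0.5e-6],
    [2e-6, 1e-6],
]
V = [0.2, 0.1, 0.3]  # read voltages applied to the rows (volts)


def crossbar_currents(G, V):
    """Column currents of an ideal crossbar: each cell passes I = G * V
    (Ohm's law) and each column wire sums its cells' currents
    (Kirchhoff's current law). The result equals G^T V."""
    n_cols = len(G[0])
    return [sum(G[r][c] * V[r] for r in range(len(V))) for c in range(n_cols)]


I = crossbar_currents(G, V)
print([f"{i * 1e6:.2f} uA" for i in I])  # ['1.10 uA', '0.75 uA']
```

The stored values never leave the array: the multiplication happens where the data lives, which is the property the brain comparison points to.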

Need For Alternatives To Current Memory Technologies

Moore's Law

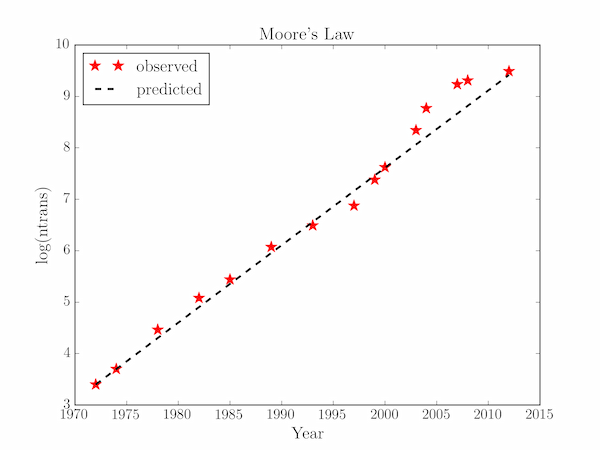

To understand why we need an alternative way to perform computations, it is important to understand Moore's Law.

Moore's Law is an observation, more precisely a prediction, that the number of silicon transistors that can be packed into a dense IC (integrated circuit) approximately doubles every two years. This prediction, made by Gordon Moore, has held roughly true since 1975.

This exponential growth in the number of transistors per chip is made possible by shrinking transistors with each manufacturing generation, which in turn increases the computing power of each new model of electronic device.
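In formula form, Moore's Law says the transistor count grows as N(t) = N0 × 2^(t/2), where N0 is the starting count and t is measured in years. The starting count of one million below is a hypothetical example, not a real chip.

```python
def projected_transistors(n0: float, years: float, doubling_period: float = 2.0) -> float:
    """Moore's Law as an exponential: the count doubles every `doubling_period` years."""
    return n0 * 2 ** (years / doubling_period)


# Starting from a hypothetical chip with 1 million transistors:
for years in (0, 2, 10, 20):
    print(years, f"{projected_transistors(1e6, years):,.0f}")
# 0 1,000,000
# 2 2,000,000
# 10 32,000,000
# 20 1,024,000,000
```

Ten doublings over 20 years multiply the count by 1,024, which is why steady transistor shrinking has produced such rapid gains.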

But transistor scaling cannot continue indefinitely. Researchers say that transistors smaller than about 5 nm (nanometers) will not behave as expected because of quantum tunnelling, which lets electrons leak through barriers that are supposed to block them, rendering the transistors useless. At the time of writing, the smallest transistors in use were 7 nm.
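Why does tunnelling get worse as transistors shrink? For a simple rectangular barrier, the probability that an electron tunnels through falls off roughly as T ≈ exp(-2κd), where d is the barrier width and κ = √(2m(V − E))/ħ depends on the electron mass m and how far the barrier height V lies above the electron energy E. The sketch assumes a 1 eV barrier; it is a textbook illustration, not a model of any real transistor (process names such as "5 nm" also do not correspond to a single physical barrier width).

```python
import math

HBAR = 1.054571817e-34   # reduced Planck constant, J*s
M_E = 9.1093837015e-31   # electron mass, kg
EV = 1.602176634e-19     # 1 electronvolt in joules


def tunnelling_probability(width_nm: float, barrier_ev: float = 1.0) -> float:
    """Approximate transmission T ~ exp(-2 * kappa * d) through a rectangular
    barrier `barrier_ev` above the electron energy and `width_nm` wide.
    The 1 eV default is an assumption chosen for illustration."""
    kappa = math.sqrt(2 * M_E * barrier_ev * EV) / HBAR  # decay constant, 1/m
    return math.exp(-2 * kappa * width_nm * 1e-9)


for d in (5.0, 3.0, 2.0, 1.0):
    print(f"{d} nm: {tunnelling_probability(d):.1e}")
```

Under these assumptions, each nanometer removed from the barrier raises the tunnelling probability by several orders of magnitude, so a barrier that blocks electrons well at 5 nm leaks badly at 1 nm.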

Applications in training ML models

Another field that could benefit from more efficient computation is machine learning. Here's a passage from an article that illustrates how much energy it takes to train machine learning models:

...researchers at Nvidia, the company that makes the specialised GPU processors now used in most machine-learning systems, came up with a massive natural-language model that was 24 times bigger than its predecessor and yet was only 34% better at its learning task. But here’s the really interesting bit. Training the final model took 512 V100 GPUs running continuously for 9.2 days. “Given the power requirements per card,” wrote one expert, “a back of the envelope estimate put the amount of energy used to train this model at over 3x the yearly energy consumption of the average American.”
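The expert's back-of-the-envelope estimate is easy to reproduce in spirit. The sketch assumes each V100 draws roughly 300 W (about the rated power of the SXM2 version of the card) for the entire run, and it ignores CPUs, networking and cooling, so it is a rough lower bound rather than a measurement.

```python
GPUS = 512
DAYS = 9.2
WATTS_PER_GPU = 300  # assumption: approximate rated power of an SXM2 V100

hours = DAYS * 24
energy_kwh = GPUS * WATTS_PER_GPU * hours / 1000  # W * h -> kWh
print(f"{energy_kwh:,.0f} kWh (~{energy_kwh / 1000:.1f} MWh)")  # 33,915 kWh (~33.9 MWh)
```

That is roughly 34 MWh for the GPUs alone.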

This is where neuromorphic computing could help: the human brain performs complex computations on only about 20 watts, a tiny fraction of the power drawn by hundreds of GPUs.

Non-volatile Random Access Memory

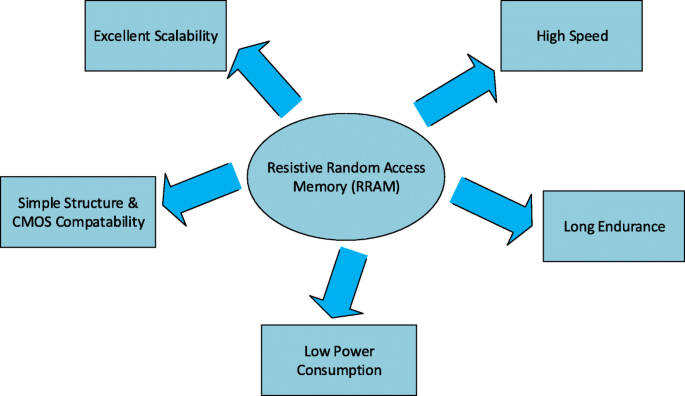

To overcome the drawbacks of current memory technologies, we need a device that not only performs fast read and write operations but also retains information after the power supply is switched off. One such device, and a promising building block for neuromorphic computing, is Resistive Random Access Memory (RRAM).

Resistive Random Access Memory

An RRAM cell consists of a metal oxide dielectric sandwiched between two electrodes. Applying a suitable voltage across the electrodes changes the resistance of the dielectric. RRAM is a type of memristor technology, i.e. the device retains its last resistance value even after the power is switched off, which gives it memory.
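A common textbook way to illustrate memristor behaviour is the linear ion-drift model: a state variable x between 0 and 1 sets the resistance between a fully "on" value R_ON and a fully "off" value R_OFF, and current flowing through the device moves x. This is a swapped-in, simplified model, not the physics of any particular device in this article, and all parameter values are hypothetical.

```python
R_ON, R_OFF = 100.0, 16_000.0  # hypothetical fully-on / fully-off resistances (ohms)
K = 1e4                         # hypothetical drift coefficient (1/coulomb)


def resistance(x: float) -> float:
    """Linear ion-drift memristor: x in [0, 1] is the fraction of the oxide
    in the conductive state."""
    return R_ON * x + R_OFF * (1 - x)


def apply_voltage(x: float, volts: float, seconds: float, dt: float = 1e-3) -> float:
    """Integrate dx/dt = K * i(t) with i = V / R(x) (forward Euler),
    clipping x to [0, 1] at the physical limits."""
    for _ in range(round(seconds / dt)):
        i = volts / resistance(x)
        x = min(1.0, max(0.0, x + K * i * dt))
    return x


x = 0.1
print(f"start: {resistance(x):.0f} ohm")        # start: 14410 ohm
x = apply_voltage(x, volts=1.0, seconds=1.0)    # positive bias lowers resistance
print(f"after +1 V: {resistance(x):.0f} ohm")   # after +1 V: 100 ohm
x = apply_voltage(x, volts=0.0, seconds=5.0)    # no bias ("power off"): state kept
print(f"after 0 V: {resistance(x):.0f} ohm")    # after 0 V: 100 ohm
x = apply_voltage(x, volts=-1.0, seconds=1.0)   # negative bias raises it again
print(f"after -1 V: {resistance(x):.0f} ohm")   # after -1 V: 16000 ohm
```

The middle step is the key one: with no voltage applied, no current flows, x does not change, and the device keeps its resistance.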

Working of RRAM

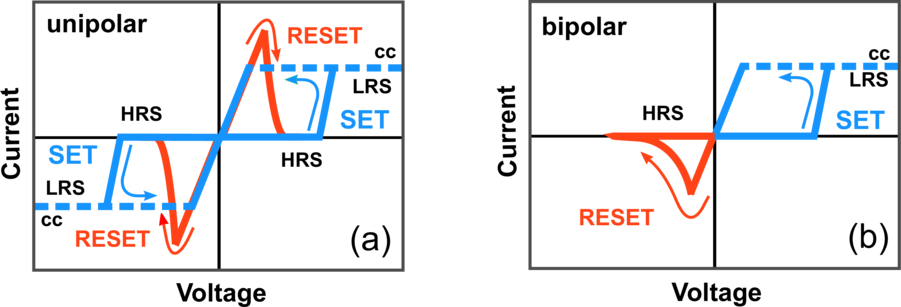

RRAM devices work on resistive switching: by controlling the applied voltage, the device can be switched between a high resistance state and a low resistance state, the two limits of its resistance.

If voltages of both polarities, positive and negative, are needed to switch the device between the high and low resistance states, the process is called bipolar resistive switching.
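Bipolar switching can be sketched as a small state machine. The thresholds, resistances and read current below are hypothetical, and which polarity sets or resets a real device depends on its materials.

```python
from dataclasses import dataclass


@dataclass
class BipolarRRAMCell:
    """Idealised bipolar resistive-switching cell (hypothetical parameters).

    SET:   V >= +v_set   -> low resistance state (LRS)
    RESET: V <= -v_reset -> high resistance state (HRS)
    Any voltage in between, including 0 V (power off), leaves the state unchanged.
    """
    r_lrs: float = 1e3            # ohms
    r_hrs: float = 1e5            # ohms
    v_set: float = 1.0            # volts
    v_reset: float = 1.0          # volts (magnitude of the negative threshold)
    low_resistance: bool = False  # start in HRS

    def apply(self, volts: float) -> None:
        if volts >= self.v_set:
            self.low_resistance = True
        elif volts <= -self.v_reset:
            self.low_resistance = False

    def resistance(self) -> float:
        return self.r_lrs if self.low_resistance else self.r_hrs

    def read_bit(self, v_read: float = 0.1, threshold_amps: float = 1e-5) -> int:
        """Read with a small voltage below both thresholds; a large current
        (Ohm's law, I = V / R) means LRS, interpreted as 1."""
        return 1 if v_read / self.resistance() > threshold_amps else 0


cell = BipolarRRAMCell()
for v in (0.5, 1.2, 0.0, -0.5, -1.2):
    cell.apply(v)
    print(f"{v:+.1f} V -> bit {cell.read_bit()}")
# +0.5 V -> bit 0
# +1.2 V -> bit 1
# +0.0 V -> bit 1
# -0.5 V -> bit 1
# -1.2 V -> bit 0
```

The read uses a small voltage below both switching thresholds, so reading the bit does not change it, and only a voltage of the opposite polarity erases it.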

Research at IIT Hyderabad

Dr. Surya Narayana Jammalamadaka is an associate professor at IIT Hyderabad. He works in the field of device physics and has long worked with magnetic materials.

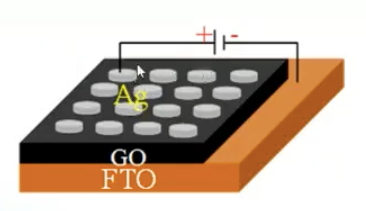

Dr. Jammalamadaka and his team at IIT Hyderabad have proposed a graphene oxide based RRAM device. It uses silver (Ag) and fluorine-doped tin oxide as electrodes, with graphene oxide between them.

This RRAM device operates on bipolar resistive switching. Graphene oxide contains highly conductive sp2 hybridised carbon atoms and less conductive sp3 hybridised carbon atoms.

Applying a suitable electric field converts sp3 hybridised carbon atoms into sp2 hybridised ones. This changes the conductivity of the device, so the applied voltage can switch it between the low and high resistance states.

The device showed good endurance and retention characteristics.

Conclusion

Neuromorphic devices are still in the research phase, and it may take time before they can be mass-produced and reach the general public. But the idea is clearly innovative and promises to address the drawbacks of traditional computing devices.