As it is known, machines started ‘learning’, a while back.

But what even is 'machine learning' or a ‘machine learning algorithm’? As it turns out, ‘algorithm’ is just a set of instructions given to the computer/machine to perform a task, we need to give these instructions in a very (painfully) clear manner. Machines can’t be given instruction in most human languages, as they’re not ‘precise’ enough, for example, a simple sentence like “Bring me a glass of water.”, has many unsaid details, like, the person to which the instruction is given has to be determined via eye-contact, or some predefined ‘power-structure’ of sorts, similarly meaning of ‘glass’ has to be ascertained, here it refers to a container, and not the transparent material, similarly the relative temperature (hot/cold and to be precise, ‘hotter or colder’ than a ‘given temperature’) of water required, has to be estimated from the current season and the age of the person asking. And even more importantly, we need to specify it’s supposed to be drinking water!

So to make a machine do the above task, we need to clearly specify each and every nitty-gritty detail, along with conditions on when to do what (an example condition can be, when the relative temperature (‘hot/cold/regular’) is not specified by the speaker, bring cold water ‘if’ it’s summer and the person isn’t old, ‘else’ bring regular water). But as evident, writing programs for many desirable tasks, hence becomes ridiculously difficult, too many conditions and too many factors.

This is where ‘learning’ comes in, as apparent from the above discussion, our brain makes these numerous, extremely complex deductions, subconsciously and executes (almost) flawlessly, the task at hand. This happens, because the brain ‘learnt’ all of this, with ‘experience’, it picked up language and got basic idea of ‘conditions’ and ‘decision making’ (like you know already that the afore-mentioned statement is asking for drinking water and not bath water!). If we can manage to ‘teach’ a machine that way, (we give a basic set of instructions and then the machine ‘learns’ by itself) the need to write explicit and exact code manually, can be eliminated, or at least reduced.

In technical terms, a machine learning algorithm is one, in which the ‘performance’, ‘improves’ with ‘experience’. Performance can be quantified in various ways, for example, for a model made to segregate incoming mails into ‘spam’ and ‘non-spam’, the ‘percentage-accuracy’ of the segregation can be used to analyze the ‘performance’. ‘Experience’ in this example, is the number of incoming mails the algorithm analyzed. The algorithm is designed in such a way, that it modifies itself according to the ‘performance’ (for example, if it wrongly classifies a regular mail as spam, the model is ‘penalized’ (modified) to take it into account), this is the ‘training’ of the model (quite clearly, we need to 'train' the model, with a lot of already 'labeled' (like 'spam' and 'non-spam', in the above example) data, to use it on actual data).

This is surprisingly effective; machine learning algorithms can now ‘identify’ objects in photographs, at accuracy comparable to, and at times even better than humans. Various varieties of learning algorithms are now in use, from your social-media feed to multi-billion dollar corporations trying to find the opinions on their product, learning algorithms are everywhere. ‘Natural Language Processing’ (NLP) is an important problem which can be tackled by learning algorithms. It is of considerable importance, as a lot of important data and information available, is in writing, and in rather informal form of writing, a lot many times (like tweets and reviews etc.).

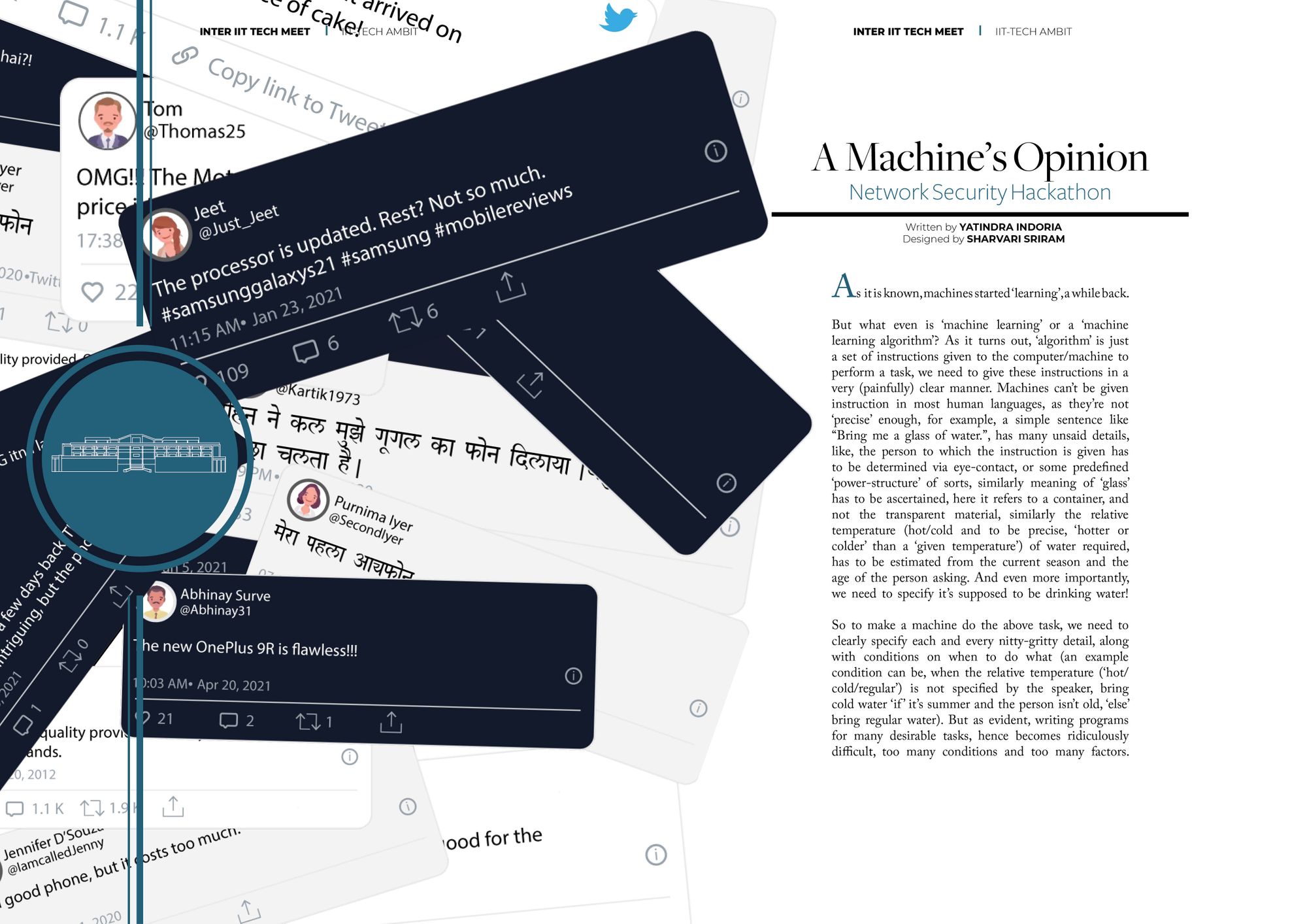

A problem statement was given on NLP, in this year’s Inter IIT Tech Meet, the contestants were given articles and tweets. For the tweets part, they were tasked to identify mobile-tech company related tweets (out of a random collection of tweets), then identify the brand mentioned in the tweet and then perform sentiment analysis (classify the tweet according to the opinion it conveys about the brand, as either negative, or positive, or neutral) on the tweet. For articles on mobile-tech, they were expected to produce an ‘automatic headline generator’. One of the most exhilarating thing about NLP models, is the fact that, they enable machines to do things which are so fundamentally ‘human’ in nature, like analyzing sentiments and producing manuscripts, now we’ll try to understand how this feat is achieved, by the analysis of the approach of the winning team towards the ‘tweet segregation and sentiment analysis’ problem (IIT Guwahati, hearty congratulations!).

Pre-Processing

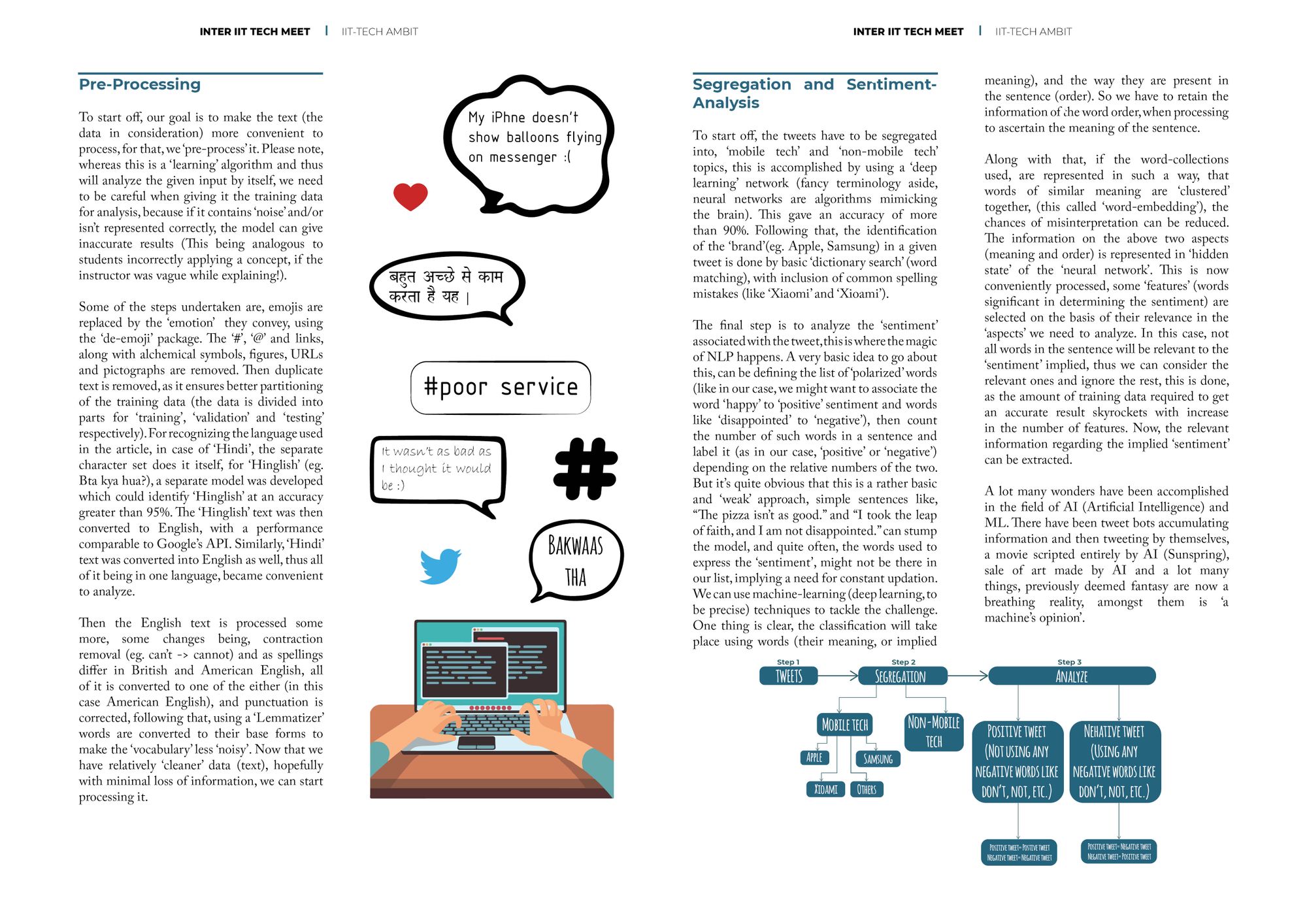

To start off, our goal is to make the text (the data in consideration) more convenient to process, for that, we ‘pre-process’ it. Please note, whereas this is a ‘learning’ algorithm and thus will analyze the given input by itself, we need to be careful when giving it the training data for analysis, because if it contains ‘noise’ and/or isn’t represented correctly, the model can give inaccurate results (This being analogous to students incorrectly applying a concept, if the instructor was vague while explaining!).

Some of the steps undertaken are, emojis are replaced by the ‘emotion’ they convey, using the ‘de-emoji’ package. The ‘#’, ‘@’ and links, along with alchemical symbols, figures, URLs and pictographs are removed. Then duplicate text is removed, as it ensures better partitioning of the training data (the data is divided into parts for ‘training’, ‘validation’ and ‘testing’ respectively). For recognizing the language used in the article, in case of ‘Hindi’, the separate character set does it itself, for ‘Hinglish’ (eg. Bta kya hua?), a separate model was developed which could identify ‘Hinglish’ at an accuracy greater than 95%. The ‘Hinglish’ text was then converted to English, with a performance comparable to Google’s API. Similarly, ‘Hindi’ text was converted into English as well, thus all of it being in one language, became convenient to analyze.

Then the English text is processed some more, some changes being, contraction removal (eg. can’t -> cannot) and as spellings differ in British and American English, all of it is converted to one of the either (in this case American English), and punctuation is corrected, following that, using a ‘Lemmatizer’ words are converted to their base forms to make the ‘vocabulary’ less ‘noisy’. Now that we have relatively ‘cleaner’ data (text), hopefully with minimal loss of information, we can start processing it.

Segregation and Sentiment-Analysis

To start off, the tweets have to be segregated into, ‘mobile tech’ and ‘non-mobile tech’ topics, this is accomplished by using a ‘deep learning’ network (fancy terminology aside, neural networks are algorithms mimicking the brain). This gave an accuracy of more than 90%. Following that, the identification of the ‘brand’(eg. Apple, Samsung) in a given tweet is done by basic ‘dictionary search’ (word matching), with inclusion of common spelling mistakes (like ‘Xiaomi’ and ‘Xioami’).

The final step is to analyze the ‘sentiment’ associated with the tweet, this is where the magic of NLP happens. A very basic idea to go about this, can be defining the list of ‘polarized’ words (like in our case, we might want to associate the word ‘happy’ to ‘positive’ sentiment and words like ‘disappointed’ to ‘negative’), then count the number of such words in a sentence and label it (as in our case, ‘positive’ or ‘negative’) depending on the relative numbers of the two. But it’s quite obvious that this is a rather basic and ‘weak’ approach, simple sentences like, “The pizza isn’t as good.” and “I took the leap of faith, and I am not disappointed.” can stump the model, and quite often, the words used to express the ‘sentiment’, might not be there in our list, implying a need for constant updation.

We can use machine-learning (deep learning, to be precise) techniques to tackle the challenge. One thing is clear, the classification will take place using words (their meaning, or implied meaning), and the way they are present in the sentence (order). So we have to retain the information of the word order, when processing to ascertain the meaning of the sentence. Along with that, if the word-collections used, are represented in such a way, that words of similar meaning are ‘clustered’ together, (this called ‘word-embedding’), the chances of misinterpretation can be reduced. The information on the above two aspects (meaning and order) is represented in ‘hidden state’ of the ‘neural network’. This is now conveniently processed, some ‘features’ (words significant in determining the sentiment) are selected on the basis of their relevance in the ‘aspects’ we need to analyze. In this case, not all words in the sentence will be relevant to the ‘sentiment’ implied, thus we can consider the relevant ones and ignore the rest, this is done, as the amount of training data required to get an accurate result skyrockets with increase in the number of features. Now, the relevant information regarding the implied 'sentiment' can be extracted.

The above is just an illustration of how man-made algorithms can accomplish feats seemingly innate to sentient beings only. A lot many wonders have been accomplished in the field of AI (Artificial Intelligence) and ML. There have been tweet bots accumulating information and then tweeting by themselves, a movie scripted entirely by AI (Sunspring), sale of art made by AI and a lot many things, previously deemed fantasy are now a breathing reality, amongst them, is 'a machine's opinion'.